The Code Matrix Nobody Will Write

AI is taking the labor that taught me to be a fire engineer. The seal stays human. The judgment behind the seal is the part I'm worried about.

I learned fire engineering the hard way. Code conformance studies nobody else read. FDS runs that crashed three nights in a row at 3 a.m. because of a single bad mesh resolution. Alternative-means-and-methods packages where I spent two weeks tracing why one IBC paragraph cross-referenced an NFPA appendix that pointed back to a CBC amendment that — once you read it carefully — meant the opposite of what the design team thought.

Most of that work is now done by software. Not next year. Now.

This is the part of the AI conversation that doesn't make the headlines. Whole-occupation replacement is a slow, regulated, hard problem. Task replacement is a Tuesday. And the tasks AI is taking from licensed engineers right now are the same tasks that, twenty years ago, taught me how to be one.

The seal stays human. Every regulator I read is converging on that answer: the National Society of Professional Engineers, the National Council of Examiners for Engineering and Surveying, the European Commission, every state board I've checked with. Machines may draft. Humans must seal. Responsible charge is reserved to a licensed person who can stand behind the work in court.

That's the easy half of the answer. The harder half is what holds up the seal.

The first draft was the training program

When I was a junior engineer, the first couple of years were not really billable in any honest sense. I was being paid to learn. The vehicle was first-draft work: somebody handed me a fire alarm narrative or a smoke control performance criteria document, and I spent five hours producing something a senior engineer would spend forty-five minutes correcting.

Nobody read those documents in the way I had to read them to write them.

The reason firms tolerated that economic arrangement is that the corrections were the curriculum. After enough corrections, you stopped writing certain sentences. You stopped citing certain code paths. You started seeing the load path, the egress path, the smoke layer, the heat release curve, the AHJ's actual concern under the polite cover letter. By year five, you could read a project narrative in fifteen minutes and tell whether the building was going to pass plan check.

That capability has a name in the literature. We call it judgment. It is the thing the seal warrants.

It does not show up in models. It does not show up in checklists. The OECD's review of AI at work captured the texture as well as anyone has: AI raises performance inside the boundaries it was trained on, and degrades it outside those boundaries. They call it the jagged frontier. Anyone who has ever run a fire model in an unusual geometry knows the feeling — the simulation runs, the colors look right, the numbers come out, and the whole thing is quietly wrong because the assumptions don't fit the building.

A senior engineer can feel that wrongness in twenty seconds. A junior engineer cannot. The difference is the pile of bad first drafts behind them.

What ambient automation actually does

The clearest evidence I have for what AI is going to do to engineering offices comes from a different profession. A multicenter study across six U.S. health systems found that after thirty days with ambient AI scribes (software that listens to the patient encounter and produces the clinical note), the share of clinicians reporting burnout fell from 51.9% to 38.8%. Less documentation. Less after-hours work. More attention for the patient.

That is a wonderful result for the clinician sitting in front of the patient today. It is also the exact mechanism that, if extrapolated to engineering, removes the work that built me.

The 2024 NSPE Board of Ethical Review case on AI in engineering practice is worth reading in full. The ethics violation was not using AI to draft plans. It was using AI to draft plans without maintaining responsible charge over the result. That distinction sounds technical. In practice it is the entire problem of the next decade. To maintain responsible charge over an AI-generated set of code conformance findings, you have to be able to spot what the AI got subtly wrong. To spot what it got subtly wrong, you have to have seen the wrongness before — usually because you produced it yourself, in the prior version of your career, and somebody senior corrected you.

If the senior engineer never produced the first draft, where does the eye for wrongness come from?

Three things AI is taking that I hope it doesn't

I am not going to argue that AI shouldn't be used in fire engineering. We use it. It is, on balance, an enormous gift to a discipline that is structurally short-staffed. Inspection capacity, plan-review backlog, retrofit modeling for existing buildings — these are problems where more leverage from AI means more buildings hardened. I want that.

But there are three categories of first-draft labor I am genuinely worried about losing, because they are how the field manufactures judgment.

The first is the code matrix. The literal spreadsheet a junior engineer builds when they are trying to figure out how the IBC, the CBC, the LAMC amendments, NFPA 13, NFPA 72, and the local fire department's interpretive memos actually fit together for a specific occupancy. AI is now extraordinary at producing the matrix. It is also extraordinary at producing a matrix that confidently contains a citation that doesn't say what it appears to say. The act of building the matrix yourself is what teaches you which paragraphs are quiet about the thing they are about.

The second is the model that lies. Smoke control modeling, structural fire engineering analysis, egress modeling — these tools all converge in a few seconds and produce answers that look authoritative. They are also wrong, often, in ways that don't surface unless someone with field experience squints at the boundary conditions. The training data for that squint is hours spent watching your own model produce nonsense and walking back through your assumptions to find why. Cleaning up after your own bad model is how engineers learn that simulation is not the same thing as physics.

The third is the AHJ negotiation. The performance-based design package or alternative-means-and-methods study where the real deliverable isn't the analysis — it's the document that gets a particular fire marshal to a yes. AI can draft the analysis. AI cannot, yet, learn that this particular AHJ doesn't trust egress modeling and wants to see the time-out tabulation done the long way. That kind of knowledge passes from senior engineer to junior engineer over coffee, in the car on the way to a meeting, on the third comment-response cycle. If juniors stop doing the analysis themselves, they don't ride along on those cycles. They miss the curriculum.

What the next ten years actually decide

Here is the part I'm uncertain about, because I think the field is uncertain about it.

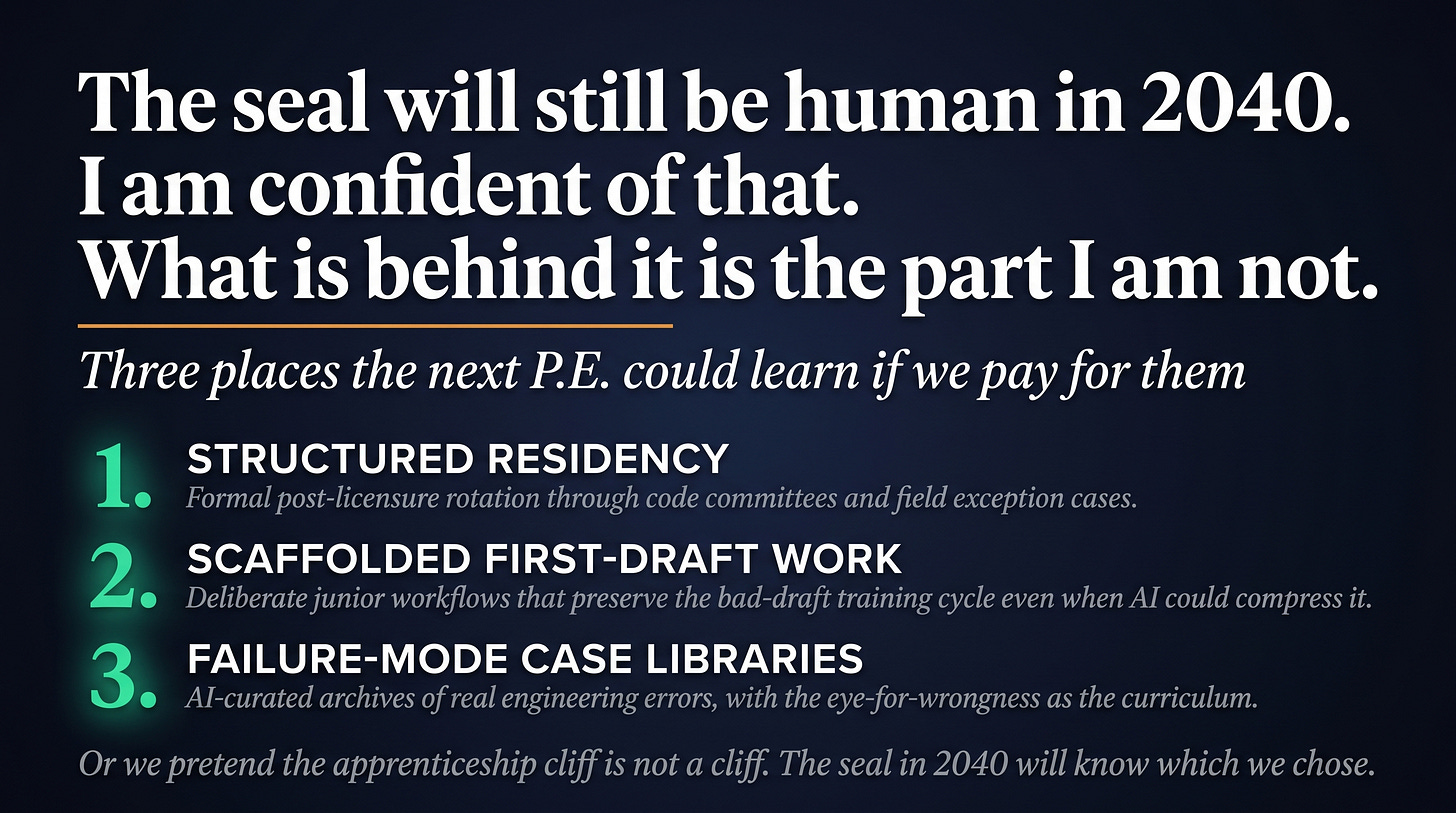

It is possible that we will scaffold judgment some other way — explicit simulation programs, structured residency models like medicine, mandatory rotations through code-development committees, AI-curated case libraries that surface the kinds of wrongness a junior needs to see. Some of that is already happening. The medical literature has clearly thought harder about this than the engineering literature has, mostly because doctors have been arguing about residency for a hundred years and we haven't.

It is also possible that we will pretend the problem doesn't exist for fifteen years, until a class of P.E.s reaches the seniority where they're sealing major projects, and somebody notices that the foundational pattern recognition isn't there. That outcome doesn't show up as one big failure. It shows up as a slow drift in the quality of plan reviews, a slow rise in litigation around AI-mediated engineering work, a slow erosion of the trust that lets a fire marshal accept a performance-based design at face value. By the time anyone identifies the trend, the apprenticeship cohort that would have been the senior engineers is already past the formative window.

I would come around to the optimistic story if I saw firms, including ours, doing two things. Building deliberate junior workflows that preserve the bad first-draft labor as a training ritual, even when AI could do it faster. And paying for the slack. Right now the economic incentive is to use AI to compress the junior cohort, which is exactly the move that hollows out the next senior cohort.

The seal will still be human in 2040. I am confident of that. What I'm not confident of is what's behind it.

If you've spent the last twenty years doing the kind of work AI is now doing in twenty seconds — codes consulting, fire modeling, performance-based design analysis — your judgment was paid for in long hours nobody else saw. The question that should keep firm leadership up at night is not whether AI will replace fire engineers. It won't. It's whether the next fire engineer is going to learn the same way you did, and if not, who is going to underwrite their education — and who is going to insure the buildings their seal is on.

That's the cliff. We're standing on it right now.
